Gravitational lenses measure the expansion of the universe

It's one of the big cosmology debates: the universe is expanding, but how fast exactly? The two available measurements yield different results. Leiden physicist David Harvey adapted an independent third measurement method, using the light-warping properties of galaxies predicted by Einstein. He published his findings in the Monthly Notices of the Royal Astronomical Society.

We've known about the expansion of the universe for almost a century. Astronomers noted that the light from faraway galaxies has a longer wavelength than that of galaxies close by. The light waves seem stretched, or redshifted, which means that those faraway galaxies are moving away from us.

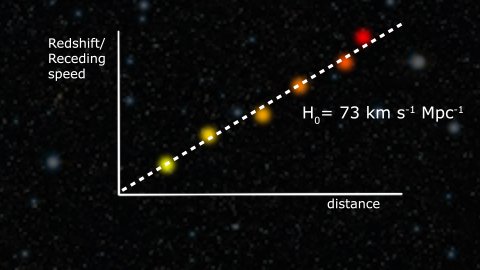

This expansion rate, or Hubble constant, can be measured. Certain supernovas, or exploding stars, have a well-understood brightness. This makes it possible to estimate their distance from Earth and relate that distance to their redshift, or speed. For every megaparsec of distance (a parsec is about 3.3 light-years), the speed at which galaxies recede from us increases by 73 kilometres per second.

However, increasingly accurate measurements of the Cosmic Microwave Background, a remnant of light from the very early universe, yielded a different Hubble constant: about 67 kilometres per second per megaparsec.
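To get a feel for the gap between the two values, here is a minimal sketch of Hubble's law (recession speed equals the Hubble constant times distance). The function name `recession_speed` and the distance of 100 megaparsecs are illustrative choices, not taken from the article; only the two Hubble constants come from the text.

```python
# Hubble's law: recession speed = Hubble constant x distance.
H0_SUPERNOVAE = 73.0  # km/s per megaparsec, from supernova distances
H0_CMB = 67.0         # km/s per megaparsec, from the Cosmic Microwave Background


def recession_speed(h0, distance_mpc):
    """Return the recession speed in km/s of a galaxy at distance_mpc megaparsecs."""
    return h0 * distance_mpc


distance_mpc = 100.0  # illustrative distance, an assumption for this example
for label, h0 in [("supernovae", H0_SUPERNOVAE), ("CMB", H0_CMB)]:
    speed = recession_speed(h0, distance_mpc)
    print(f"{label}: {speed:.0f} km/s")
# prints:
# supernovae: 7300 km/s
# CMB: 6700 km/s
```

At this example distance the two measurements disagree by 600 kilometres per second, which is the kind of discrepancy a third, independent method could help resolve.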

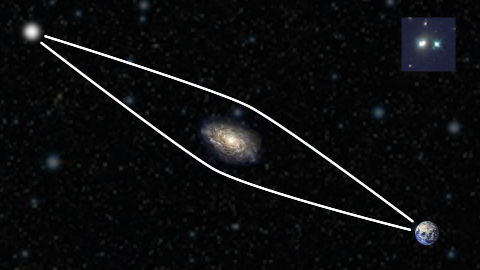

-

The source's light reaches earth through multiple paths -

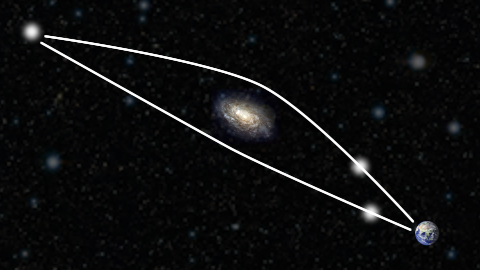

When the lens is off-center, one path is shorter -

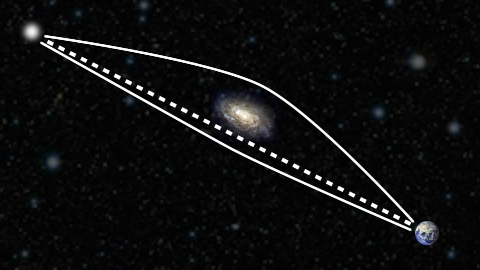

Variations from the source will arrive at different times, allowing to measure the distance -

Comparing distance to redshift/speed fixes the Hubble constant

Einstein

How can that be? Why the difference? Could this difference tell us anything new about the universe and physics? 'This', says Leiden physicist David Harvey, 'is why a third measurement, independent from the other two, has come into view: gravitational lenses.'

Albert Einstein’s theory of General Relativity predicts that a concentration of mass, such as a galaxy, can bend the path of light, much like a lens does. When such a galaxy is in front of a bright light source, the light is bent around it. It can reach Earth via different routes, which gives us two, sometimes even four, images of the same source.

H0LiCOW

In 1964, the Norwegian astrophysicist Sjur Refsdal had an aha moment: when the lensing galaxy is a bit off-center, one route is longer than the other. That means that the light takes longer along that path. So when the brightness of the source, usually a quasar, varies, this blip will be visible in one image before the other. The difference could be days, or even weeks or months.

This timing difference, Refsdal showed, can also be used to pin down distances to the quasar and the lens. Comparing these with the redshift of the quasars gives you an independent measurement of the Hubble constant.
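The core of this idea can be sketched in a few lines. For a fixed lens model, the predicted time delay is inversely proportional to the Hubble constant, so an assumed value can be rescaled until the predicted delay matches the observed one. This is a simplified illustration, not the analysis used in any real study: the function name `hubble_from_delay` and all numbers below are hypothetical.

```python
def hubble_from_delay(h0_assumed, delay_predicted_days, delay_observed_days):
    """Rescale an assumed Hubble constant to match an observed time delay.

    For a fixed lens model, the time delay scales as 1 / H0, so a shorter
    observed delay than predicted implies a larger Hubble constant.
    """
    return h0_assumed * delay_predicted_days / delay_observed_days


# All values below are hypothetical, chosen only to illustrate the scaling.
h0_assumed = 70.0        # km/s per megaparsec, used to compute the prediction
delay_predicted = 30.0   # days, from a lens model under h0_assumed
delay_observed = 28.0    # days, measured between the two quasar images

h0 = hubble_from_delay(h0_assumed, delay_predicted, delay_observed)
print(f"inferred H0: {h0:.1f} km/s per megaparsec")
# prints: inferred H0: 75.0 km/s per megaparsec
```

In practice, the predicted delay depends on how the mass in the lensing galaxy is distributed, which is where most of the uncertainty enters.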

A research collaboration called H0LiCOW used six of these lenses to narrow down the Hubble constant to about 73 kilometres per second per megaparsec. However, there are complications: apart from the difference in path length, the mass of the foreground galaxy has a delaying effect too, depending on the exact mass distribution. 'You have to model that distribution, but a lot of unknowns remain,' says Harvey. Uncertainties like this limit the accuracy of this technique.

Imaging the whole sky

This could change when a new telescope comes online in Chile in 2021, dedicated to imaging the whole sky every few nights. The Vera Rubin Observatory is expected to see thousands of double quasars, offering a chance to narrow down the Hubble constant even further.

Harvey: 'The problem is: modelling all those foreground galaxies individually is impossible, computationally.' So instead, Harvey designed a method to calculate the average effect of a full distribution of up to a thousand lenses.

'In that case, individual quirks of the gravitational lenses are not that important, and you don't have to do simulations for all the lenses. You just have to make sure that you model the entire population', says Harvey.

'In the paper, I show that with this approach, the error in the Hubble constant levels off at 2 percent when you approach thousands of quasars.'
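Why would the error level off instead of shrinking forever? A toy error budget gives some intuition, though it is not the method from the paper. It assumes that the random scatter of individual lenses averages down with the square root of the number of lenses, while a fixed modelling uncertainty does not. The function name `h0_fractional_error` and the 50 percent per-lens scatter are hypothetical; the 2 percent floor is taken from the quote above.

```python
import math


def h0_fractional_error(n_lenses, per_lens_scatter=0.5, model_floor=0.02):
    """Toy fractional error on H0 from a population of n_lenses lenses.

    per_lens_scatter: assumed random error of a single lens (hypothetical).
    model_floor: error that does not shrink with more lenses (2 percent).
    """
    statistical = per_lens_scatter / math.sqrt(n_lenses)
    # Independent errors add in quadrature.
    return math.hypot(statistical, model_floor)


for n in (10, 100, 1000, 10000):
    print(f"{n:>6} lenses: {100 * h0_fractional_error(n):.1f} percent")
```

In this sketch, adding lenses helps a lot at first, but beyond about a thousand lenses the total error is dominated by the fixed modelling term.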

This error margin will allow a meaningful comparison between the different Hubble constants, and could help in understanding the discrepancy. 'And if you want to go below those 2 percent, you have to improve your model by doing better simulations. My guess is that this would be possible.'

David Harvey, ‘A 4 per cent measurement of H0 using the cumulative distribution of strong lensing time delays in doubly imaged quasars’, MNRAS 498, 2871–2886 (2020) doi:10.1093/mnras/staa2522